Witbe – The Velocity Paradox: AI Accelerates Production, But Who Tests What Gets Shipped?

Yoann Hinard, COO, Witbe

Two years ago, companies were willing to invest in AI just to see what would happen. Budgets flowed into proofs of concept with no defined business case, and “experimentation” was a legitimate line item. That phase is over.

What has replaced it is more interesting and more demanding: AI is now embedded in production workflows, and it is already reshaping how video services are built, localized, and delivered. But the impact is uneven across the content chain, and understanding where AI genuinely transforms operations – versus where it remains a solution looking for a problem – matters more than ever.

Where AI Has Already Been Brutal

Some parts of the video content chain have already been permanently altered. Localization, closed captioning, and dubbing have seen intense and rapid change. Companies that built their business around these services are operating in a fundamentally different reality. AI did not incrementally improve their workflows – it restructured them.

Content development has shifted too. Teams use AI-assisted tools for documentation, code generation, and build automation. The result is clear: development cycles that once ran on six-month release schedules now produce multiple releases per day. Teams are leaner, output is higher, and the pace of delivery keeps accelerating.

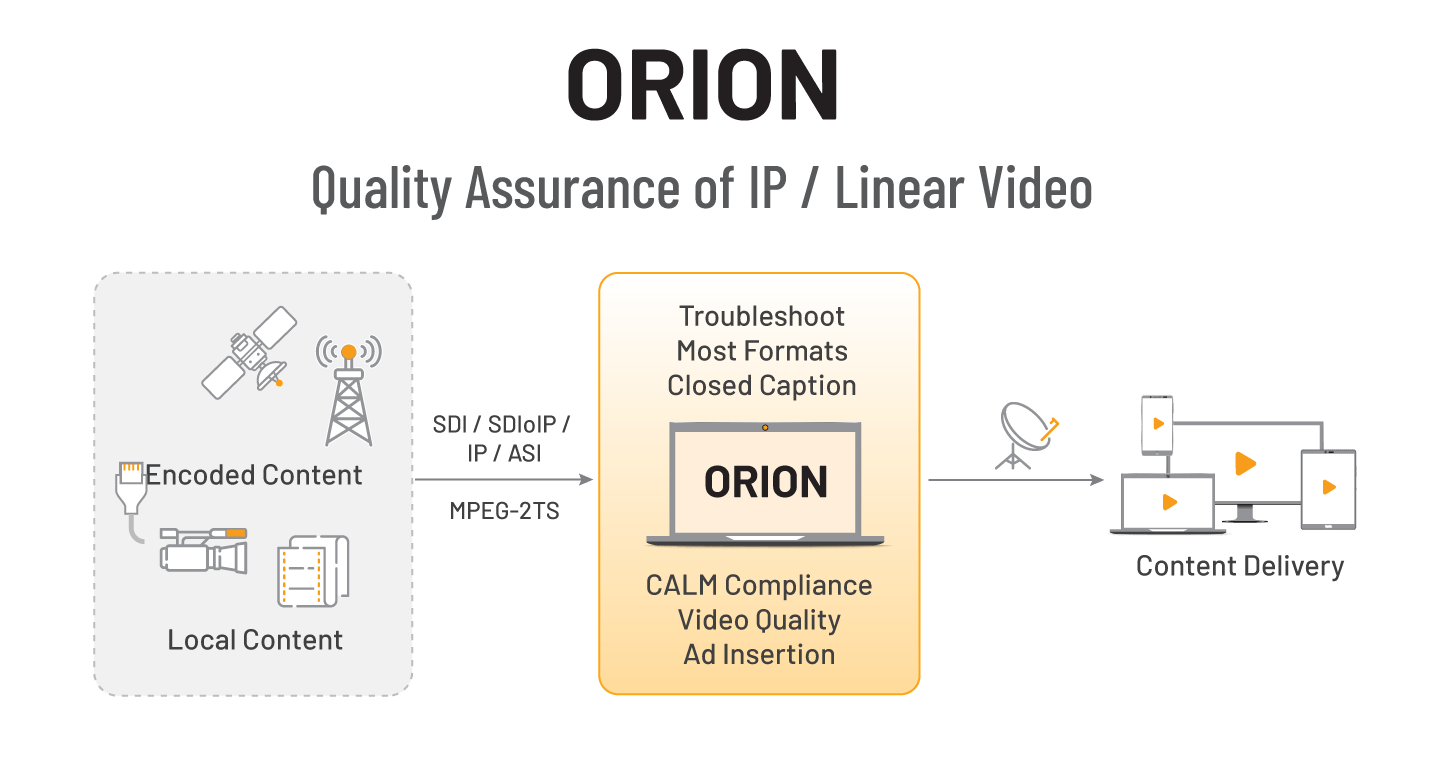

But not everything has changed. For encoding, CDN routing, and delivery infrastructure, AI remains largely experimental. These systems already rely on highly efficient algorithms for load balancing and fast decision-making. Introducing a large language model into those processes would add latency and unpredictability without a clear gain. If a process already works well with conventional algorithms, there is no business case for replacing them with AI.

The Squeeze At The End of the Chain

This is where the paradox emerges. AI-assisted development means teams ship faster, with fewer people. But a rule of thumb holds across the media industry: the QA team is always a fraction of the development team. If a dev team has ten engineers, QA might have three or four. Nobody invests more in testing a product than in building it.

When development velocity doubles or triples, QA teams face a math problem they cannot solve with headcount. The releases come faster. The device landscape keeps fragmenting – Smart TVs alone now span dozens of operating systems, screen sizes, and firmware versions. And services must work not just in the lab, but in production, on real devices, in real conditions, across regions.
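To make the math problem concrete, here is a minimal back-of-the-envelope sketch. Every figure in it (releases per week, device configurations, scenarios, minutes per run) is a hypothetical placeholder chosen for illustration, not data from any operator, and the `QaLoad` name is an assumption of this sketch.

```python
from dataclasses import dataclass


@dataclass
class QaLoad:
    """Hypothetical weekly regression workload for a manual QA team."""
    releases_per_week: int
    device_configs: int          # distinct device / OS / firmware combinations
    scenarios_per_release: int   # test scenarios run on each configuration
    minutes_per_manual_run: float

    def manual_hours_per_week(self) -> float:
        # Each release must be checked on every configuration for every scenario.
        runs = (self.releases_per_week
                * self.device_configs
                * self.scenarios_per_release)
        return runs * self.minutes_per_manual_run / 60


# Illustrative numbers only: one release a week versus three.
baseline = QaLoad(releases_per_week=1, device_configs=20,
                  scenarios_per_release=50, minutes_per_manual_run=3.0)
faster = QaLoad(releases_per_week=3, device_configs=20,
                scenarios_per_release=50, minutes_per_manual_run=3.0)

print(baseline.manual_hours_per_week())  # 50.0
print(faster.manual_hours_per_week())    # 150.0
```

Under these assumed figures, tripling release frequency triples the manual testing hours while the QA team stays the same size, and any growth in device configurations multiplies the load again.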

The pressure is compounded by a commercial reality: if a new feature misses a tentpole event like the Super Bowl or the Olympics, the window is gone. Time-to-market is not an abstract metric. It is the difference between capturing an audience and arriving too late.

A Fifteen-Year-Old Problem Meets a New Solution

The idea of automating QA on real devices is not new. For 25 years, Witbe has been running automated test scenarios on actual consumer devices – Smart TVs, set-top boxes, mobile phones – under real user conditions. And for just as long, customers have been asking the same question: can the system learn to navigate an application on its own, without being manually taught every screen and every interaction?

Fifteen years ago, the answer was no. The technology was not there. Five years ago, early machine learning approaches showed promise but lacked the intelligence and cost efficiency to scale. Models were too unreliable and too expensive. A system that failed 50% of the time at a dollar per run had no business case.
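The arithmetic behind that last sentence can be sketched in a few lines. The function below assumes that a failed run is simply retried until it succeeds and that attempts are independent, which is a simplification; the function name is hypothetical.

```python
def cost_per_successful_run(cost_per_run: float, failure_rate: float) -> float:
    """Expected spend to obtain one successful run, retrying on failure.

    With independent attempts, the expected number of attempts until the
    first success is 1 / (1 - failure_rate).
    """
    if not 0.0 <= failure_rate < 1.0:
        raise ValueError("failure_rate must be in [0, 1)")
    return cost_per_run / (1.0 - failure_rate)


# The figures quoted in the text: a dollar per run, failing half the time.
print(cost_per_successful_run(1.0, 0.5))  # 2.0
```

Under this simplified model, every usable result costs twice the sticker price, before counting the time lost to retries across every scenario and device.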

What changed in 2024 and 2025 was not a single breakthrough but a convergence: models became more capable, faster, and cheaper. Equally important, the industry learned how to govern AI within operational workflows – when to let it adapt and when to keep validation anchored in deterministic, algorithmic logic.

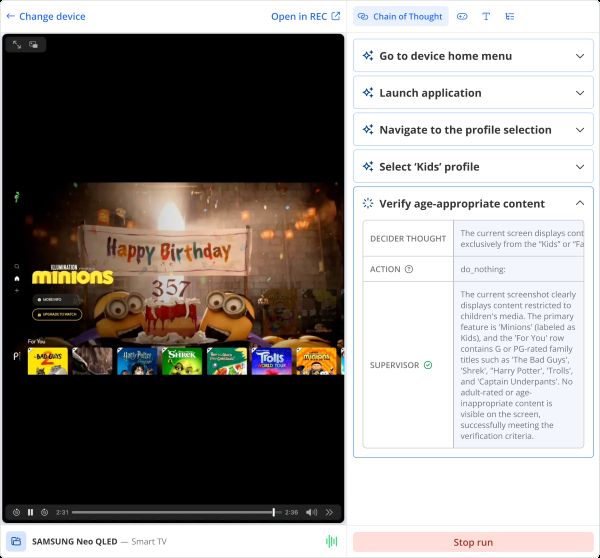

This is the approach Witbe now deploys across its customer base through the Agentic SDK: hybrid automation that combines agentic AI for navigating dynamic user interfaces with algorithmic steps for measuring reliable KPIs – startup time, buffering, video quality. The AI adapts when Netflix changes its layout or when a Smart TV manufacturer updates its operating system. The algorithmic layer ensures the measurements remain consistent and comparable.

Image Caption: Agentic AI navigating a streaming application on a Samsung Smart TV – each step is visible, governed, and validated by a supervisor layer.
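The division of labor described above, adaptive navigation followed by deterministic measurement, can be sketched as follows. This is not the Agentic SDK's API: every name here (`Navigator`, `ScriptedNavigator`, `measure_startup_time`, `run_check`) is hypothetical, and the navigator is a scripted stand-in where a real system would drive a device through a model.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable, Optional, Protocol


class Navigator(Protocol):
    """Adaptive layer: reach a screen described by a goal, however the UI looks."""
    def go_to(self, goal: str) -> bool: ...


class ScriptedNavigator:
    """Stand-in for an agentic navigator (hypothetical; no model is called)."""
    def __init__(self, reachable_goals: Iterable[str]) -> None:
        self._reachable = set(reachable_goals)

    def go_to(self, goal: str) -> bool:
        return goal in self._reachable


@dataclass
class KpiResult:
    name: str
    seconds: float
    passed: bool


def measure_startup_time(start_playback: Callable[[], None],
                         threshold_s: float,
                         clock: Callable[[], float] = time.monotonic) -> KpiResult:
    """Deterministic layer: time the action with a clock, no model involved."""
    started = clock()
    start_playback()  # assumed to return once playback has started
    elapsed = clock() - started
    return KpiResult("startup_time", elapsed, elapsed <= threshold_s)


def run_check(navigator: Navigator, goal: str,
              start_playback: Callable[[], None], threshold_s: float,
              clock: Callable[[], float] = time.monotonic) -> Optional[KpiResult]:
    """Navigate adaptively, then measure algorithmically.

    Returns None when navigation fails, so a navigation problem is never
    recorded as a KPI value.
    """
    if not navigator.go_to(goal):
        return None
    return measure_startup_time(start_playback, threshold_s, clock)


result = run_check(ScriptedNavigator(["Live TV"]), "Live TV",
                   start_playback=lambda: None, threshold_s=2.0)
print(result)
```

Keeping the measurement function free of any model call is the design point: a layout change affects only the navigator, while the KPI is computed the same way on every run and stays comparable over time.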

From Experimentation To Immediate Value

The shift in customer behavior has been striking. Deployment timelines have compressed from months-long proofs of concept to immediate production use. Customers no longer want to evaluate a technology in isolation. They put it in the hands of their QA teams from day one and expect it to generate measurable value within weeks, not quarters.

For tier-two and tier-three operators – often running lean technical teams across large geographic footprints – this matters even more. A Canadian operator with 60 locations and a small technical staff does not have time for a prolonged evaluation. They need technology that works when plugged in and that non-technical staff can operate without months of training. With agentic automation, a content team can verify that Super Bowl highlights appear correctly on the homepage by stating what to check in plain language. The system understands the instruction and executes it.

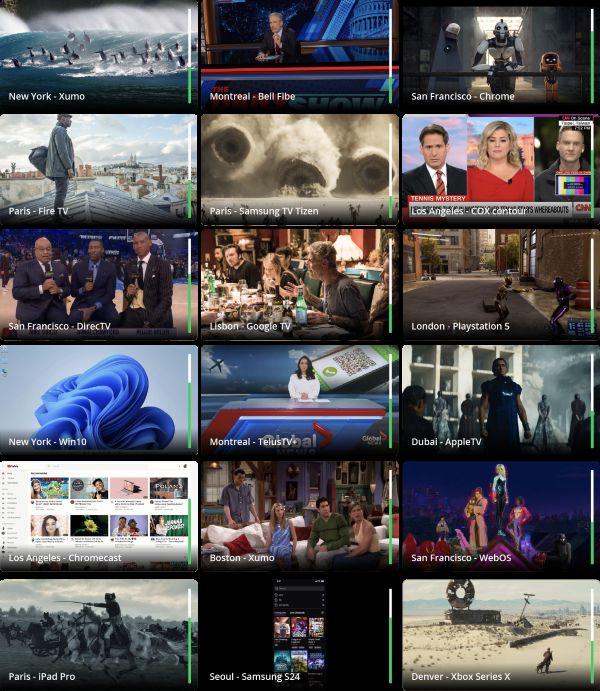

Image Caption: Witbe’s Remote Eye Controller (REC) monitoring real devices across six countries simultaneously – from Fire TV and Apple TV to PlayStation 5 and iPad Pro.

What Automation Actually Changes

The conversation about AI and jobs often defaults to replacement narratives. The reality in video QA is different. AI is not eliminating testing roles – it is changing what those roles focus on. When routine regression testing runs automatically across dozens of devices, QA engineers spend their time on higher-value work: defining quality standards, interpreting edge cases, and making go/no-go decisions on production releases with better data.

The velocity paradox does not resolve by slowing down development. It resolves by making quality assurance as fast and as scalable as the production pipeline it serves. For an industry that ships more frequently, to more devices, in more markets than ever before, that is not a theoretical benefit. It is an operational necessity.

Yoann Hinard is COO of Witbe, a technology company specializing in AI-powered real-device testing and monitoring for streaming services. Witbe is trusted by over 300 leading brands across 50+ countries. Learn more at www.witbe.net
