Saranyu – Scaling Short-Form Video for Global Audiences

Suresh B G, Chairman, Founder, Saranyu Technologies Pvt. Ltd. India

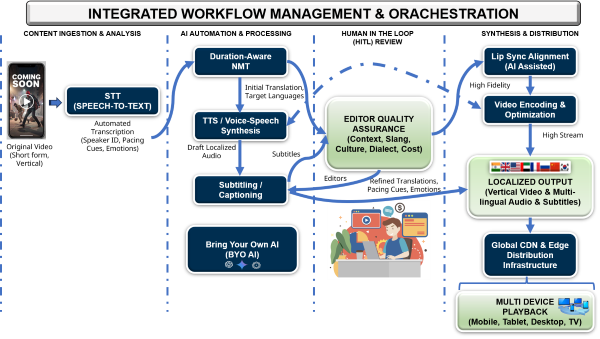

Executive Summary: As short-form video and micro-drama formats spread globally, media companies must rapidly repurpose and localize content for new audiences. AI-driven orchestration workflows are turning transcription, translation, and dubbing into integrated pipelines that enable faster, lower-cost international distribution.

The global video ecosystem is rapidly shifting toward shorter, mobile-first content experiences. Platforms such as TikTok, Instagram Reels, and YouTube Shorts have shown that short-form video can attract massive audiences and sustain high engagement. TikTok alone has surpassed one billion monthly active users globally, and short-form formats now represent a growing share of daily video consumption across social and streaming platforms. Younger audiences especially expect video to be quick to consume, easy to discover, and continuously available.

Out of this environment has emerged a storytelling format often described as micro-drama—short episodic narratives designed specifically for mobile viewing. Unlike purely user-generated clips, micro-dramas combine professional storytelling with short-form production techniques. Episodes typically run between 30 seconds and a few minutes, forming serialized narratives that encourage continuous viewing across multiple segments.

The Rise of the Micro-Drama Ecosystem

Micro-drama formats are gaining traction across global markets. In India, audiences increasingly consume short-form dramas produced in China and South Korea. In Africa and parts of Southeast Asia, short clips derived from Bollywood films circulate widely across social platforms and OTT services. Meanwhile, U.S. streaming platforms have begun experimenting with similar formats adapted for Western audiences.

Dedicated platforms such as DramaBox and ReelShort, which distribute serialized mobile-first dramas, have generated billions of views worldwide, demonstrating the commercial viability of this storytelling model.

These cross-border content flows highlight a broader shift: short-form video travels globally far more easily than traditional long-form programming. For content owners and creative teams, this creates both opportunity and complexity. While short-form formats open new engagement channels, scaling them internationally requires faster production cycles and new localization workflows.

Repurposing existing content has become a key strategy. Long-form programs can be transformed into highlights, curated clips, or serialized micro-episodes. Podcasts can be converted into visual segments, while archival libraries can be mined for moments that resonate with social audiences. These approaches extend the value of existing media assets while creating formats better suited to mobile viewing.

Localization is equally critical. Content that resonates in one language must often be translated, subtitled, or dubbed to reach global audiences. A single video may require multiple language versions to maximize distribution, and subtitles alone can significantly expand accessibility.

Orchestrating the Localization Pipeline

AI is increasingly enabling scalable localization. Speech-to-text (STT) systems transcribe dialogue, translation models convert that dialogue into new languages, and text-to-speech (TTS) engines generate localized audio tracks.

However, effective localization requires more than linking AI tools together. Media organizations need orchestrated workflows that combine automation with editorial oversight.

A typical pipeline begins with automated transcription of the original dialogue. Modern STT systems identify speakers, segment conversations, and capture emotional cues. Translation models then adapt the dialogue for the target language.

Effective translation must be duration-aware: the dialogue is rephrased, with its meaning preserved, so that the translated line fits naturally within the timing of the original video. Literal translation often produces awkward dubbing because spoken pacing differs across languages.
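A duration-aware check can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular product: the `Segment` type, the `fits_slot` helper, and the default speaking rate of 2.5 words per second are all assumptions made for the example. A real system would use a per-language rate (and a different unit for languages that do not separate words with spaces).

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # slot start in the original video, in seconds
    end: float    # slot end, in seconds
    text: str     # translated dialogue proposed for this slot


def estimated_duration(text: str, words_per_second: float = 2.5) -> float:
    """Rough spoken length of a line; the rate is an assumed placeholder."""
    return len(text.split()) / words_per_second


def fits_slot(seg: Segment, tolerance: float = 0.10,
              words_per_second: float = 2.5) -> bool:
    """True if the line should fit its slot, allowing a small overrun."""
    slot = seg.end - seg.start
    return estimated_duration(seg.text, words_per_second) <= slot * (1 + tolerance)


ok = fits_slot(Segment(0.0, 2.0, "one two three four five"))
too_long = fits_slot(Segment(0.0, 1.0, "one two three four five"))
```

Lines that fail the check would go back to the translation model or to an editor for a shorter rephrasing rather than being sped up at synthesis time.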

Human review remains essential. Editors refine translations to address slang, dialects, acronyms, and hybrid language usage such as “Hinglish.” They may also insert pacing or emotion cues that guide voice generation.

Once the script is finalized, text-to-speech engines generate the localized audio track. At this stage, precise timing becomes critical. A timing-accurate dubbing engine must align generated speech with scene cuts, pauses, and speaker transitions so that the new audio integrates seamlessly with the original video.
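One simple way to think about this alignment step is as a playback-rate decision per segment. The sketch below is hypothetical: the `tempo_factor` function and its rate limits (0.9 to 1.15) are illustrative assumptions, not values taken from any dubbing engine. It computes how much a synthesized clip would need to be sped up to fit its slot, and flags segments where the required change is too large to sound natural.

```python
from typing import Tuple


def tempo_factor(clip_seconds: float, slot_seconds: float,
                 min_rate: float = 0.9, max_rate: float = 1.15) -> Tuple[float, bool]:
    """Return (playback_rate, needs_rewrite) for one dubbed segment.

    A rate above 1.0 speeds the clip up. If the ideal rate exceeds
    max_rate, the clip still overruns after clamping, so the line is
    flagged for a shorter rewrite instead.
    """
    if clip_seconds <= 0 or slot_seconds <= 0:
        raise ValueError("durations must be positive")
    ideal = clip_seconds / slot_seconds
    rate = min(max(ideal, min_rate), max_rate)
    needs_rewrite = ideal > max_rate
    return rate, needs_rewrite


rate_ok, flag_ok = tempo_factor(2.2, 2.0)      # mild speed-up, no rewrite
rate_long, flag_long = tempo_factor(3.0, 2.0)  # clamped, flagged for rewrite
```

A clip that is shorter than its slot is slowed only down to `min_rate`; the remaining gap would simply be left as silence before the next speaker transition.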

Recent advances in voice cloning and lip-sync technologies are further improving localization quality. Voice cloning allows characters or presenters to maintain consistent vocal identities across languages and episodes. Lip-sync technologies can adjust speech timing—or subtly adapt mouth movements—so that dubbed dialogue aligns more closely with the original performance. These techniques help translated content feel natural and emotionally engaging for global audiences.

Responsible deployment is also becoming important. Many organizations now adopt consent-based voice cloning frameworks, ensuring voice models are created only with explicit permission from actors or presenters. This helps address ethical concerns while maintaining transparency in AI-assisted production workflows.

Figure 1. Orchestrated AI Video Localization Pipeline

Modern orchestration architectures are emerging to manage this complexity. Platforms such as MAGMA Studio, developed by Saranyu, illustrate how these principles can be applied in practice by coordinating transcription, translation, dubbing, and review processes within a unified workflow.

A key architectural principle is modularity. Through approaches such as Bring-Your-Own-AI (BYOAI), organizations can integrate their preferred language models, translation engines, or voice synthesis technologies rather than being locked into a single vendor stack. This flexibility allows broadcasters to adopt new AI capabilities as they evolve.
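The modularity idea can be illustrated with plain Python interfaces. The sketch below is an assumption-laden example and does not represent the MAGMA Studio API: the `Transcriber`, `Translator`, and `Synthesizer` protocols, the `LocalizationPipeline` class, and the stub engines are all hypothetical names invented here. The point is only that the pipeline depends on small interfaces, so any engine that implements them can be swapped in.

```python
from dataclasses import dataclass
from typing import Protocol


class Transcriber(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class Translator(Protocol):
    def translate(self, text: str, target_lang: str) -> str: ...


class Synthesizer(Protocol):
    def synthesize(self, text: str, lang: str) -> bytes: ...


@dataclass
class LocalizationPipeline:
    stt: Transcriber
    mt: Translator
    tts: Synthesizer

    def run(self, audio: bytes, target_lang: str) -> bytes:
        transcript = self.stt.transcribe(audio)
        translated = self.mt.translate(transcript, target_lang)
        return self.tts.synthesize(translated, target_lang)


# Stub engines standing in for real vendor integrations.
class EchoSTT:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")


class TagTranslator:
    def translate(self, text: str, target_lang: str) -> str:
        return f"[{target_lang}] {text}"


class BytesTTS:
    def synthesize(self, text: str, lang: str) -> bytes:
        return text.encode("utf-8")


pipeline = LocalizationPipeline(EchoSTT(), TagTranslator(), BytesTTS())
result = pipeline.run(b"hello", "hi")
```

Replacing one engine means passing a different object to the constructor; the orchestration code in `run` does not change. A human review step could be added in the same way, as another interface called between translation and synthesis.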

The New Economics of Global Scale

The economics of localization are also changing rapidly. Traditional dubbing workflows often cost hundreds of dollars per finished minute and can take weeks to produce across multiple languages.

AI-assisted localization pipelines can dramatically reduce both cost and turnaround time. In many cases, localization expenses drop by 60–80 percent, while new language versions can be generated in hours rather than weeks. These efficiencies are essential when managing large volumes of short-form content.
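To make the scale of these savings concrete, the arithmetic can be worked through for a hypothetical catalog. All inputs below are illustrative assumptions rather than figures from any real project: 50 episodes of 90 seconds each, five target languages, and a traditional rate of $300 per finished minute. Only the 60 and 80 percent reductions come from the range cited above.

```python
def dubbing_cost(minutes: float, languages: int, rate_per_minute: float,
                 reduction_pct: int = 0) -> float:
    """Total dubbing cost after applying a percentage reduction."""
    if not 0 <= reduction_pct <= 100:
        raise ValueError("reduction_pct must be between 0 and 100")
    return minutes * languages * rate_per_minute * (100 - reduction_pct) / 100


# Hypothetical catalog: every number here is an assumption for illustration.
episodes, minutes_each, languages = 50, 1.5, 5
rate = 300.0  # assumed traditional cost in dollars per finished minute

total_minutes = episodes * minutes_each
baseline = dubbing_cost(total_minutes, languages, rate)
at_60_pct = dubbing_cost(total_minutes, languages, rate, reduction_pct=60)
at_80_pct = dubbing_cost(total_minutes, languages, rate, reduction_pct=80)
```

Under these assumptions the traditional baseline is $112,500, falling to $45,000 at a 60 percent reduction and $22,500 at 80 percent. The absolute figures depend entirely on the assumed rate and volume; the proportions are what carry over.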

Delivering short-form video at scale also requires efficient distribution infrastructure. Vertical videos are typically consumed in rapid sequences, so applications must transition smoothly between clips with minimal buffering. Intelligent caching and pre-loading help maintain seamless playback.
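A pre-loading strategy of this kind can be sketched as a small cache that fetches the next few clips in the feed while the current one plays. The example below is a simplified, synchronous illustration: `PrefetchCache`, its `lookahead` and `capacity` parameters, and the `fetch` callback are hypothetical names, and a production player would fetch asynchronously and in segments rather than whole clips.

```python
from collections import OrderedDict
from typing import Callable, Sequence


class PrefetchCache:
    """Keeps the current clip plus the next few clips in a small LRU cache."""

    def __init__(self, fetch: Callable[[str], bytes],
                 lookahead: int = 2, capacity: int = 5):
        if capacity < lookahead + 1:
            raise ValueError("capacity must hold the current clip plus the lookahead")
        self._fetch = fetch
        self._lookahead = lookahead
        self._capacity = capacity
        self._cache: "OrderedDict[str, bytes]" = OrderedDict()

    def _ensure(self, clip_id: str) -> None:
        if clip_id in self._cache:
            self._cache.move_to_end(clip_id)  # mark as recently used
            return
        self._cache[clip_id] = self._fetch(clip_id)
        while len(self._cache) > self._capacity:
            self._cache.popitem(last=False)  # evict the least recently used clip

    def play(self, feed: Sequence[str], index: int) -> bytes:
        """Return the clip at `index`, loading it and the lookahead window."""
        last = min(index + self._lookahead, len(feed) - 1)
        for i in range(index, last + 1):
            self._ensure(feed[i])
        return self._cache[feed[index]]
```

With `lookahead=2`, swiping from the first clip to the second triggers only one new fetch, because the second and third clips were already loaded while the first was playing.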

Efficient encoding strategies also play a role when distributing millions of short clips daily. AI-assisted video optimization can adjust compression settings based on clip complexity, helping balance visual quality with bandwidth efficiency while reducing CDN costs.
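The idea of complexity-based compression can be shown with a deliberately simple mapping. In the sketch below, the `pick_bitrate_kbps` function, the 0-to-1 complexity score, and the 800 to 2500 kbps range are all assumptions chosen for illustration; how the complexity score is produced (for example, by a model analyzing motion and detail) is outside the scope of the example.

```python
def pick_bitrate_kbps(complexity: float, low: int = 800, high: int = 2500) -> int:
    """Map a clip complexity score in [0, 1] to a target bitrate.

    Simple clips (static shots, little motion) get bitrates near `low`;
    complex clips (fast motion, fine detail) get bitrates near `high`.
    Scores outside [0, 1] are clamped.
    """
    c = min(max(complexity, 0.0), 1.0)
    return round(low + c * (high - low))


talking_head = pick_bitrate_kbps(0.0)
action_scene = pick_bitrate_kbps(1.0)
typical_clip = pick_bitrate_kbps(0.5)
```

Real systems typically choose among a ladder of encodes rather than a single linear value, but the principle is the same: bandwidth is spent where the content needs it.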

As short-form video reshapes the media landscape, the ability to repurpose, localize, and distribute content efficiently will become a competitive advantage. By combining AI-driven automation with orchestrated workflows and human oversight, media organizations can scale storytelling across languages and regions—unlocking new audiences and extending the value of content libraries in the evolving world of short-form video.
