Wowza – When Video Becomes Operational Infrastructure: What’s Changing in Enterprise AV

Krish Kumar, CEO of Wowza

For those of us who have spent a long time in media and streaming, the core challenges used to be relatively well understood: how to get video from point A to point B, how to scale delivery, and how to maintain quality under load.

Those challenges still matter, but they are no longer the center of gravity.

Video is now showing up across more parts of the business and directly shaping critical decisions. Cameras are embedded across cities, infrastructure, and facilities, running continuously in environments where conditions are unpredictable. The number of devices capturing video continues to grow, from surveillance and mobile cameras to drones, vehicles, and industrial systems, and with it the volume of visual data organizations have to manage.

Teams are managing more streams than they were even a year ago, and the volume of video moving through their systems keeps increasing. At the same time, that video is being used to make decisions, coordinate teams, meet compliance requirements, and understand what’s happening in real time.

What We’re Seeing Across Enterprise Deployments

From our work with thousands of enterprise customers, a few patterns are consistent:

- Video is becoming a source of truth for operations, not just a record of what happened

- Camera deployments are scaling from hundreds to thousands of feeds per environment

- Regulated industries are driving adoption, especially transportation, healthcare, and public safety

- Systems are required to run in hybrid, on-prem, or isolated environments

- Buyers are shifting toward developers, architects, and compliance teams building video into applications

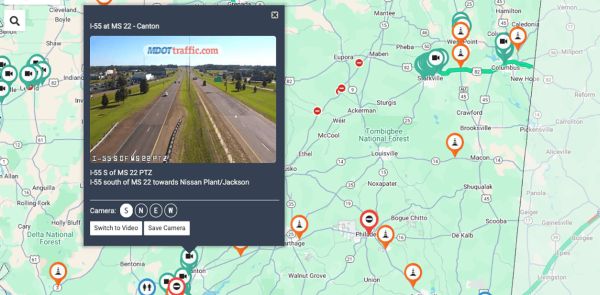

Transportation teams are managing live roadway conditions during severe weather, using video feeds to decide where to deploy resources and how to route emergency services. Industrial operators are monitoring remote environments and guiding decisions without being physically present. Security and defense teams are maintaining continuous visibility across distributed environments where failure is not an option.

In our customer base, these requirements show up at scale. In offshore operations, teams are working with bandwidth in the low megabit range, and feeds are often unstable. Operators are making decisions off partial or degraded video. The system has to stay usable even when conditions aren’t, because waiting for a clean signal isn’t an option.

In transportation, one state department of transportation is using live video and AI across more than a thousand cameras to support real-time visibility into traffic conditions and routing decisions, and has built its own on-prem GPU infrastructure to run those workloads locally. The continuous visibility allows the team to push updates and manage congestion as it develops. Expanding a highway can run around $5 million per lane mile, so constant visibility into what’s happening on the road gives the department a way to delay expansion and avoid those costs.

Across these environments, the expectation is consistent. Video is not separate from the operation. It is part of how decisions get made.

Where the Model Starts to Break

As deployments scale, a practical challenge shows up quickly.

Teams are responsible for hundreds or thousands of live feeds, and there is no realistic way to watch all of them continuously. Expanding visibility does not solve the problem. It increases the amount of information without improving the ability to act on it.

At that point, the focus shifts from delivery to attention.

Organizations are looking for ways to surface the moments that matter, whether that is a stalled vehicle, equipment behaving unexpectedly, or activity in a restricted area. The goal is to make it clear where to look and when to act.

From Video Feeds to Video Intelligence

At a certain scale, the model of adding more cameras starts to break. Once teams are responsible for hundreds or thousands of feeds, attention becomes the limiting factor. There’s no practical way to watch everything, and the volume of video keeps increasing. Teams end up sorting through more information in real time, often under pressure, with no ability to go back and rewatch every moment.

What teams care about is whether they can quickly understand what’s happening and decide what to do next.

This is the shift toward video intelligence.

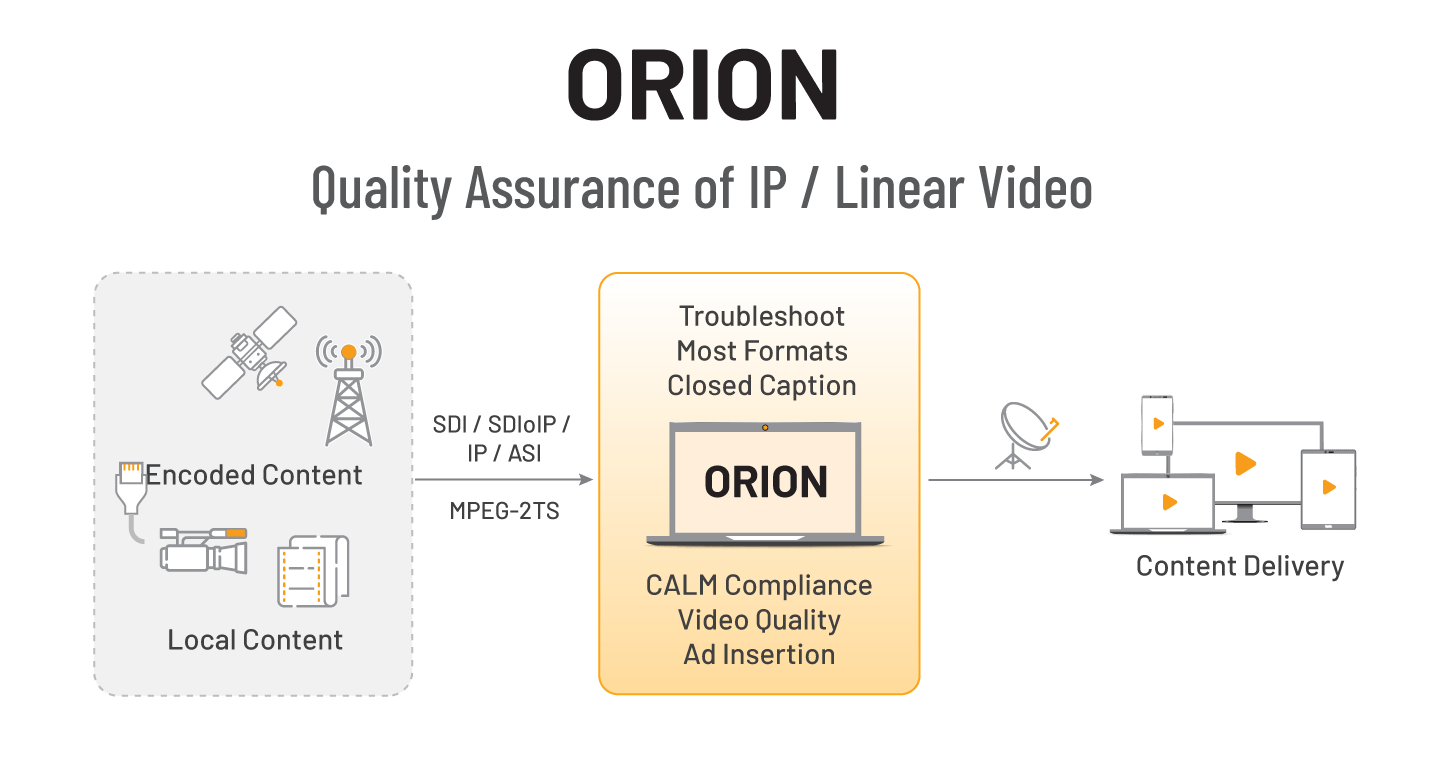

Instead of treating video as something to move and store, organizations are starting to treat it as something that can be interpreted and connected to their systems. AI plays a role here, embedded within the pipeline to turn live video into information that fits into how teams operate.

For example, our offshore operations customers are monitoring remote sites over links that can drop down into the 256 kbps–2 Mbps range, pulling RTSP feeds into a central system to guide decisions in real time. Traditionally, operators would keep multiple feeds open, watch for anything unusual, and manually trigger a recording if something looked off. If they missed something, the only option was to go back later and piece together what happened.
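To make the link constraint concrete, here is a rough budgeting sketch. The per-feed bitrates and the 20% headroom are illustrative assumptions, not figures from any deployment, and `feeds_that_fit` is a hypothetical helper name.

```python
def feeds_that_fit(link_kbps: int, per_feed_kbps: int, headroom_pct: int = 20) -> int:
    """Estimate how many feeds fit on a link.

    `headroom_pct` reserves part of the link for protocol overhead and
    bitrate bursts. All values are whole kilobits per second.
    """
    if link_kbps <= 0 or per_feed_kbps <= 0:
        raise ValueError("bitrates must be positive")
    if not 0 <= headroom_pct < 100:
        raise ValueError("headroom_pct must be in [0, 100)")
    usable_kbps = link_kbps * (100 - headroom_pct) // 100
    return usable_kbps // per_feed_kbps


# Assumed example: a 400 kbps low-resolution feed on the two ends of the range.
best_case = feeds_that_fit(2000, 400)   # 2 Mbps link
worst_case = feeds_that_fit(256, 400)   # 256 kbps link
```

Under these assumed numbers, a link that carries several feeds at its best carries none at its worst, which is why per-feed bitrate typically has to adapt to the link instead of being fixed in advance.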

Now, they expect the system to ingest feeds and flag changes as soon as they happen, such as activity in the wrong area or equipment behaving outside normal patterns.
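One simple way to picture this kind of change detection is a rolling baseline per feed. The sketch below assumes some upstream component already produces a numeric activity score per frame for each feed. That score, the class and function names, and the thresholds are all hypothetical, and a production system would likely use a trained model in place of this statistical check.

```python
from collections import deque
from statistics import mean, pstdev


class FeedMonitor:
    """Rolling baseline of a per-frame activity score for one feed (hypothetical helper)."""

    def __init__(self, window: int = 30, threshold: float = 3.0,
                 min_sigma: float = 0.05, warmup: int = 5):
        self.history = deque(maxlen=window)  # keeps only the most recent scores
        self.threshold = threshold           # std devs from baseline that count as a change
        self.min_sigma = min_sigma           # floor so a flat baseline is not over-sensitive
        self.warmup = warmup                 # samples required before anything is flagged

    def observe(self, score: float) -> bool:
        """Record `score` and return True if it departs from the rolling baseline."""
        flagged = False
        if len(self.history) >= self.warmup:
            baseline = mean(self.history)
            spread = max(pstdev(self.history), self.min_sigma)
            flagged = abs(score - baseline) > self.threshold * spread
        self.history.append(score)
        return flagged


def surface_events(monitors: dict, scores: dict) -> list:
    """Return the IDs of feeds whose latest score was flagged, sorted by ID."""
    return sorted(feed_id for feed_id, score in scores.items()
                  if monitors[feed_id].observe(score))


# Two hypothetical feeds: both quiet for ten frames, then one changes.
monitors = {"deck-cam": FeedMonitor(), "pump-cam": FeedMonitor()}
quiet = [surface_events(monitors, {"deck-cam": 0.1, "pump-cam": 0.1}) for _ in range(10)]
alerts = surface_events(monitors, {"deck-cam": 0.1, "pump-cam": 0.9})
```

The point of the design is that operators see a short list of feed IDs to look at, not a wall of video to scan.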

Why Architecture Is Being Reconsidered

Once video becomes part of operational workflows, the underlying architecture has to support it.

Many enterprise video systems have grown incrementally, with separate components for ingest, processing, and delivery. As expectations increase, that approach becomes harder to maintain, especially across different environments.

Organizations are rethinking architecture around a few principles:

- Processing video closer to where it is captured to reduce data movement and cost

- Supporting hybrid deployments across cloud, on-prem, and edge

- Maintaining operation when connectivity is limited

- Connecting video directly to operational workflows and systems
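As one illustration of maintaining operation when connectivity is limited, an edge node can queue detected events locally and forward them once the link returns. This is a minimal sketch, not a description of any particular product. `StoreAndForward`, the `send` callback, and the event shape are all hypothetical.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Bounded local queue that forwards events in order when the uplink is available."""

    def __init__(self, send: Callable[[dict], bool], max_events: int = 1000):
        self._send = send                         # returns True only if delivery succeeded
        self._pending = deque(maxlen=max_events)  # when full, the oldest event is dropped

    def submit(self, event: dict) -> None:
        """Queue an event, then try to drain the queue."""
        self._pending.append(event)
        self.flush()

    def flush(self) -> int:
        """Send queued events oldest-first; stop at the first failure. Returns the count sent."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def backlog(self) -> int:
        return len(self._pending)


# Simulated uplink: a flag stands in for real connectivity.
received = []
link = {"up": False}

def send(event: dict) -> bool:
    if link["up"]:
        received.append(event)
        return True
    return False

buffer = StoreAndForward(send, max_events=3)
for seq in range(5):
    buffer.submit({"seq": seq})   # link is down, so events accumulate
backlog_while_down = buffer.backlog
link["up"] = True
sent_on_reconnect = buffer.flush()
```

Bounding the queue is a deliberate trade-off: on a long outage the node keeps the most recent events and sheds the oldest, instead of exhausting local storage.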

These decisions are shaped by both performance and economics. Continuous streams and real-time processing introduce bandwidth and compute demands that require more deliberate design.

Where This Is Heading

For those coming from a traditional media background, this represents an expansion of what enterprise AV is expected to support.

Video is becoming part of the operational layer across transportation, public safety, industrial systems, and beyond. It is expected to be available, reliable, and integrated into systems that drive decisions.

As that continues, platforms will evolve to not just move video, but translate it into something that can be understood and acted on in real time.

Video has always helped people understand what’s happening. What’s changing is that it’s starting to shape what happens next. Organizations that treat it as part of their operational infrastructure are starting to see the difference.
